BY ROBERT DOCTER –

Questions for the 21st Century

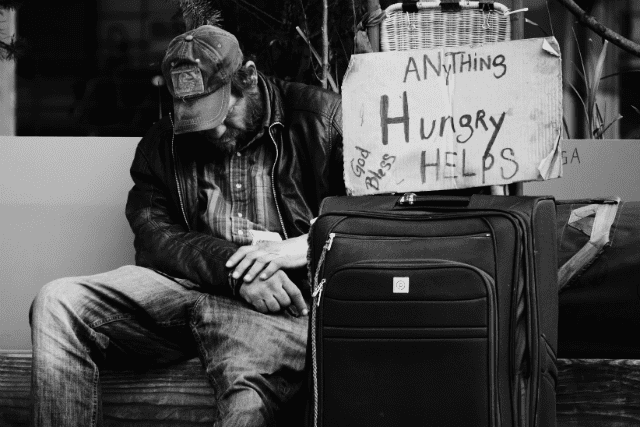

We’re about to start a new year. It’s time we addressed a particularly thorny matter. I’m not sure that the Army’s current organizational and decision-making model will keep it effective through the 21st century. I want to raise some process questions concerning how the organization works.

Does The Salvation Army want to have sufficient data and information for long-range planning? Does it have the knowledge and skills to evaluate what it is currently doing? Are we using our resources effectively? What do those who participate in our programs believe to be our strengths and our weaknesses? What parts of this Army must be preserved for us to remain what we are? Are we organized to engage in profound change of those parts that we determine to be vulnerable? What might we gain or lose if we do?

Somewhere, I envision a knowledgeable, committed, consecrated group of people exploring these questions. Maybe it’s happening somewhere. If so, I don’t know where it is. I’ve engaged in many discussions like this, usually over coffee after a meeting. I even participated in such a discussion in a formal manner about ten years ago, when some of those same questions were raised. Interestingly, some significant change took place, but then it died. I never learned why.

I wonder why we don’t have our own “rand” (research and development) organization to help us with some of these questions: the big questions, like those above, and the little questions, like why this program is successful and that one isn’t, and what “success” is.

I don’t think we’re doing too well even on the little questions. To my knowledge, there are only a very few employees and even fewer officers in the Army charged with primary responsibility either to engage in the process of evaluation or to assist others in engaging in that process.

Oh, I know we have boards and councils at the divisional and territorial levels that are required to review and approve programs, but for the life of me, I don’t know what criteria they use to make their judgments. Too often, I fear, they are limited to criteria pertaining to our mission or to available resources. Within those limitations, the evaluative questions they would ask are: (1) does it “save souls, grow saints or serve a suffering humanity without discrimination?” and, (2) do we have the right people, a good building in the right location, and sufficient money for the program to support itself? This is a “goal-based” approach to evaluation. It doesn’t give us much help in understanding how a program really works, its strengths and weaknesses, or the degree to which the program benefits the clients served.

Now, “mission” and “available resources” are important criteria. The organization’s “mission” is critical to the evaluation process. Our goals should grow from that mission. It’s a good mission statement, but very broad. Equally true is the importance of an examination of available resources. If, however, these are viewed alone, decision makers could be led astray. We don’t do decision makers any favor by imposing this responsibility on them in the absence of a sufficient database designed specifically to generate a comprehensive evaluation of the program being proposed or under review.

Many of our social service programs are required by funding sources to report on their progress toward their goals and whether or not they meet certain specific criteria. Additionally, the ARC command engages in an excellent program review. Moreover, I am confident we are achieving facets of our mission in many other areas of endeavor. Often, however, I believe we do not know exactly what we are doing well or what we are doing that inhibits our effectiveness.

Take the manual Evaluating the Harvest, developed by Major Terry Camsey as an “evaluation tool for congregations” prior to a corps review. I wonder if we are getting the maximum potential from this goal-based tool. Corps members, for instance, might explore many of the questions from a process-based or an outcome-based perspective that might lead toward modification of some of the objectives. I wonder who might be available to teach members of a corps how to engage in these activities. I wonder if such an exercise would be helpful. In the absence of data, how will we know?

We need to expand and improve our program evaluation. It’s not enough to evaluate the quality of a program on the basis of a hunch. I think, all too often, we use this technique and then make decisions about the program, often based on reputation or personal criteria. Somehow, it’s decided to support it, fund it, expand it, or close it. I don’t think this is in the best long-range interests of the Army or of the people we seek to serve.

So where do you agree or disagree? Lemme know.